Evaluate the Information Architecture of the B2B dashboard and learn how partners interact with it.

📌 Project scope:

Client: blankt

Timeframe: 3 weeks | May | 2023

My role: UX Researcher intern

Team: Product owner, developers, and one UX/UI Designer.

Methods: hybrid card sorting, tree testing and user testing.

Tools: Optimal Workshop, Google Forms, Figma, FigJam and Trello.

Methodology: User testing

Evaluative research project impact

The context

Research Goals

The process

blankt is a startup on a mission to make everyone an artist through its design engine, which enables people to create art without previous artistic skills. Its focus is to help retailers offer their customers the ability to create unique designs on their products. This reduces the need for large collections, warehouses, and long-distance shipping, while making the customer experience more enjoyable and personal.

My process followed the Double Diamond design approach. I also documented and shared research findings, hypotheses, and design opportunities with the team to support an iterative design process.

The new B2B dashboard does not incorporate input from B2B partners.

The methodology

To evaluate the Information Architecture (IA) of the new dashboard, I selected two methods: hybrid card sorting and tree testing. In addition, to gain a deeper understanding of how B2B partners engage with the interface and where they encounter difficulties, and to uncover potential usability issues, I decided to observe them completing tasks in moderated usability tests.

Card sorting is a valuable method for defining and testing the architecture of new websites and digital products. It would allow me to quickly understand how users categorize information and to assess the relevance of the provided content.

Tree testing, on the other hand, would help me evaluate the hierarchy and findability of topics in the dashboard.

Once I had completed the card sorting, tree testing, and user tests, I began synthesising and clustering the gathered information to generate actionable insights based on partners’ feedback. The main insights from the card sorting and tree testing were:

💡Insight 1

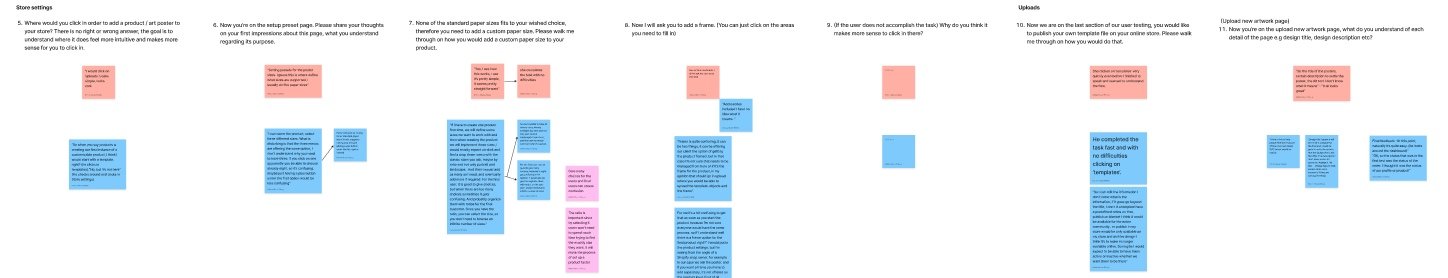

After conducting both user tests, I transcribed the content into FigJam. I clustered similar answers into themes and used a different color to identify each partner. The yellow sticky notes are the insights I extracted from each theme.

The main insights, design opportunities and hypotheses were:

The final deliverables included a research report with main research findings, insights, design opportunities, hypotheses, main quotes from B2B partners to support the presented data, and a presentation tailored for stakeholders.

I received positive feedback from stakeholders, including the CEO of blankt group AB, on both the presentation and my professionalism when conducting user testing with the B2B partners.

As this was the last user research project of my internship, I was unable to follow up. The next steps would be to validate the formulated hypotheses through user testing with B2B partners.

The design iterations based on these actionable insights grounded the launch of the new B2B dashboard in data-driven design decisions. As a result, B2B partners are expected to use and navigate the new dashboard easily and efficiently.

The project also uncovered a new research opportunity around the sales feature of the dashboard, which the findings showed to be the feature B2B partners use most and consider most important. The research prevented higher delivery costs for B2B partners and uncovered a potential solution that will allow them to have control over their orders and provide accurate delivery costs to their customers.

💭 Reflection

• This project reflects some of the research methods that I applied during my internship as a UX researcher. It was my first time managing a user research study with B2B partners in a professional setting. The experience made me reflect on the importance of knowing when to apply each method to gather valuable data.

• The card sorting and tree testing findings showed the importance of a clear and intuitive information architecture and helped me identify ambiguous elements.

• Importance of User Feedback: Actively listening to user feedback, whether through card sorting or tree testing, is central to creating user-centered designs that resonate with the audience.

• Observing B2B partners interacting with the dashboard provided me with a deeper understanding of potential usability issues and also confirmed the ambiguity found in a category during the card sorting and tree testing.

• I believe these research practices were extremely valuable in assisting developers and UX designers in building a user-centered digital product and contributing to an iterative design. All methods emphasized the need to iterate the design based on users' feedback, expectations, and requirements.

• Additionally, adaptability emerged as a key attribute when coordinating and conducting research, particularly within an agile framework characterized by sprints and time constraints.

💡Insight 2

In tree testing, B2B partners found "Contact Us" and "Need Help" intuitive in the "Integration settings" category. However, during card sorting, there was some variation, with partners also associating these cards with "Store settings." This suggests a need for clear labeling and potentially offering these features in both categories to accommodate their varying expectations.

💡Insight 4

In tree testing, B2B partners found it highly intuitive to place "Coupon and gift cards" under "Sales," with no consideration for other options. This was corroborated during card sorting, where two users also intuitively categorized them under "Sales," indicating a strong alignment between user expectations and this category.

I conducted two rounds of research involving five B2B partners. In the first round, I used Optimal Workshop to perform unmoderated hybrid card sorting. I chose this method because the dashboard was still in development, and it would allow partners not only to sort cards into predefined categories but also to create their own. The study was divided into two steps:

• Sort the cards provided into categories + Create new cards

• Categorize the cards as most important, somewhat important, or not at all important

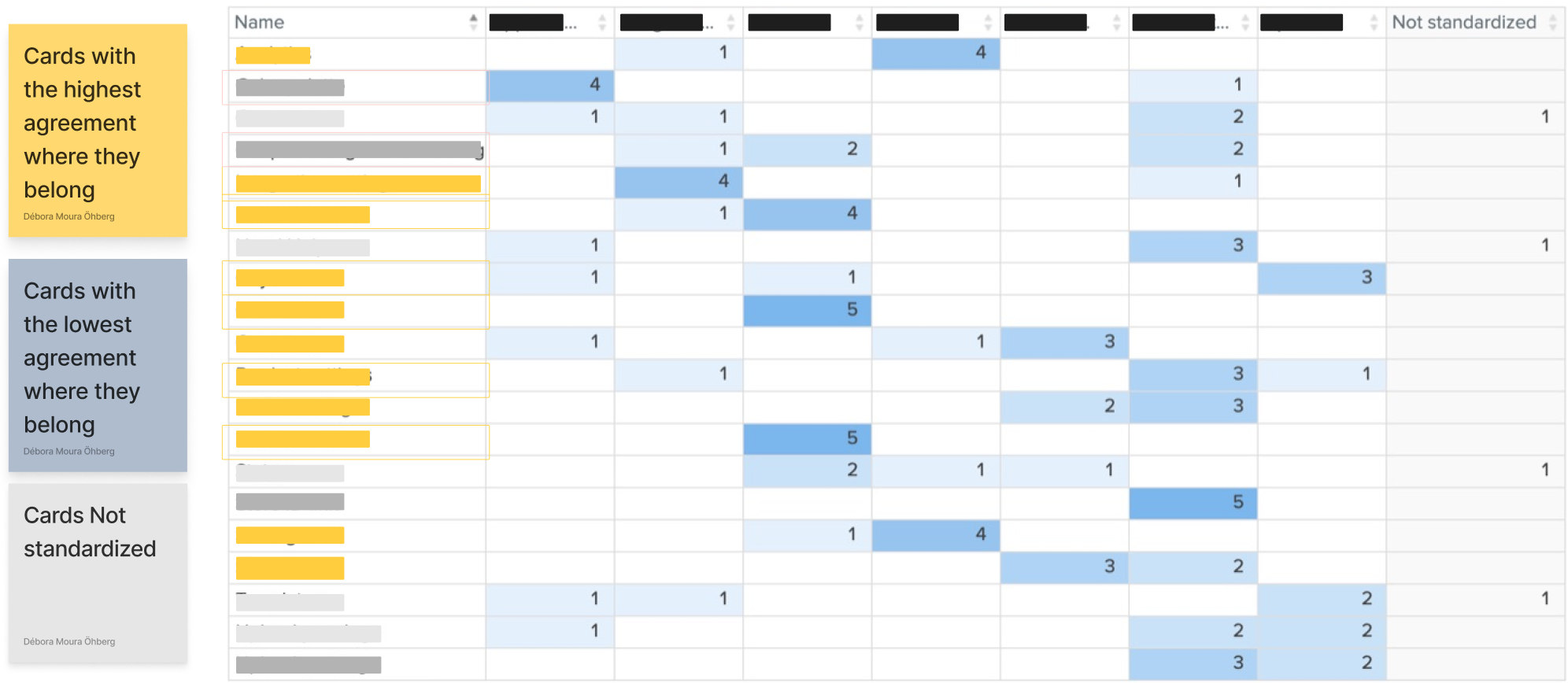

• Which cards had varying interpretations among B2B partners, indicating ambiguity?

• Which cards are of the highest importance and should be prioritized on the dashboard?

• Which cards had the highest ranking on their categorization?

• Which cards had the lowest ranking on their categorization?

• What are the most popular groups?
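The first question in this list, which cards showed varying interpretations, can be approached with a simple agreement score: the share of participants who placed a card in its most common category. The sketch below is one minimal way to compute it, assuming the results are available as a list of chosen categories per card. The card names and placements are made-up illustrative data, not the results of this study, and the function names are hypothetical.

```python
from collections import Counter

# Hypothetical placements (NOT the study's data):
# each card maps to the category chosen by each of five participants.
placements = {
    "Card A": ["Store settings"] * 5,
    "Card B": [
        "Store settings",
        "Store settings",
        "Store settings",
        "Integration settings",
        "Statistics",
    ],
}


def agreement(categories):
    """Return (most common category, share of participants who chose it)."""
    top_category, count = Counter(categories).most_common(1)[0]
    return top_category, count / len(categories)


def rank_by_agreement(card_placements):
    """List cards from lowest to highest agreement (most ambiguous first)."""
    scored = [(card, *agreement(cats)) for card, cats in card_placements.items()]
    return sorted(scored, key=lambda row: row[2])


for card, category, share in rank_by_agreement(placements):
    print(f"{card}: {category} ({share:.0%})")
```

Cards at the top of the ranking are candidates for a follow-up such as tree testing, since low agreement suggests the label or category is read differently by different partners.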

Methodology: Tree testing

I also conducted tree testing to examine the cards with low agreement in the card sorting and to understand why B2B partners chose the options they selected. The study was conducted through Google Forms, and each question contained the following tasks:

Because one of my goals was to gain a deep understanding of how partners interact with the dashboard, I conducted two moderated user tests with two of our current B2B partners. While the recommended practice is to test with at least three users, I tested with only two because of time constraints and recruitment challenges. It was valuable to see them interact with the dashboard and to hear their requirements, needs, and general feedback.

✏️ Note: To give the sessions a clearer structure and make the data easier to synthesize, I divided the tasks and questions of the user test into four categories.

⒈ Where would you find [specific card] in the dashboard?

( ) Integration settings

( ) Statistics

( ) Store settings

⒉ Why did you select this option? (Open-ended question)

⒊ Was there any other option you considered selecting instead?

Evaluative Research [Part 2] - Usability and interaction

Discover

Evaluative Research [Part 1] - Information architecture

Methodology: Hybrid Card Sorting

While extracting the data from the card sorting, I was looking into:

Define

Evaluative Research [Part 1] - Analysis & Synthesis

During tree testing, B2B partners favored the "Appearance settings" category for the "Color Palette" card, while in the card sorting the majority of users placed it under "Store settings." This points to potential ambiguity that requires further investigation to improve the information architecture and user experience.

💡Insight 3

B2B partners showed uncertainty in categorizing "Uploads settings" during both tree testing and card sorting, considering it could fit in "Uploads" or "Store settings." Notably, none placed it in "Integration settings," emphasizing the need for clearer categorization and guidance.

💡Insight 5

In tree testing, B2B partners associated "Store ID-link" with "Store settings" but were open to "Integration settings" as an alternative. During card sorting, however, all users placed it in "Store settings," highlighting a strong and clear association with this category.

Hybrid card sorting

Tree testing

User testing